Introduction

The EU AI Act is a landmark regulation by the European Union, set to reshape the landscape of artificial intelligence (AI) usage within and beyond its borders. This act introduces a robust legal framework to ensure AI systems are safe, ethical, and transparent. The ramifications of this regulation extend globally, influencing not only European companies but also enterprises worldwide that interact with the EU market. This article provides a comprehensive overview of the EU AI Act and discusses its implications for companies developing and utilizing AI tools outside the EU territory.

Understanding the EU AI Act

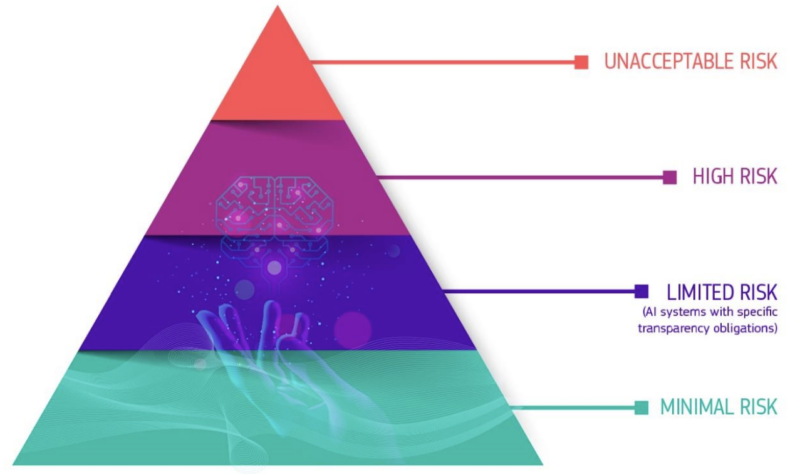

The EU AI Act is a regulatory framework that classifies AI systems based on the risk they pose.

Key Features

- Risk-Based Classification: AI systems are sorted into tiers by the risk they pose, from unacceptable risk at the top, through high and limited risk, down to minimal risk. High-risk applications, such as those affecting health, safety, or fundamental rights, face stringent operational requirements.

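- Prohibited Practices: The Act bans specific AI uses outright, including most real-time remote biometric identification in publicly accessible spaces for law enforcement, and AI that manipulates people or exploits the vulnerabilities of specific groups.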

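- Transparency Requirements: Systems that interact with people or generate synthetic content must make clear that AI is involved, and high-risk systems must be accompanied by documentation of their capabilities and limitations, so that users are informed about the technology they interact with.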
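The ordering of the tiers can be illustrated with a short Python sketch. It is a simplified illustration, not a legal test: the `AISystem` fields and the `triage` function are hypothetical names invented for this example, and an actual classification depends on the Act's detailed criteria and annexes.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    """The four tiers commonly used to describe the Act, highest risk first."""
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass(frozen=True)
class AISystem:
    # Hypothetical, heavily simplified attributes for illustration only.
    name: str
    uses_prohibited_practice: bool = False
    affects_health_safety_or_rights: bool = False
    interacts_with_people: bool = False


def triage(system: AISystem) -> RiskTier:
    """Return the highest tier whose (simplified) condition the system meets."""
    if system.uses_prohibited_practice:
        return RiskTier.UNACCEPTABLE
    if system.affects_health_safety_or_rights:
        return RiskTier.HIGH
    if system.interacts_with_people:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


if __name__ == "__main__":
    screening_tool = AISystem("cv-screening", affects_health_safety_or_rights=True)
    print(triage(screening_tool).value)
```

The checks run from the most to the least severe tier, so a system that meets several conditions lands in the strictest applicable tier.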

Compliance Requirements

The EU AI Act imposes the following obligations on enterprises:

- Documentation and Reporting: Enterprises must maintain detailed records demonstrating compliance with the act’s stipulations, from data handling to system capabilities.

- Risk Mitigation Measures: High-risk AI systems require rigorous testing and risk assessment procedures before deployment.

- Incident Reporting: Enterprises must promptly report any serious incidents related to high-risk AI systems to the relevant EU authorities.

The Pyramid of Criticality for AI Systems

Implications for Non-EU Enterprises

For AI Developers

AI developers outside the EU fall within the Act’s reach whenever their systems are offered on the EU market. The main consequences are:

- Market Access. To access the EU market, developers must ensure their AI systems comply with the EU AI Act’s stringent requirements, which may require significant changes to system design and deployment strategy.

- Increased Costs. Compliance with the act may increase operational costs due to the need for enhanced testing, documentation, and adherence to strict governance standards.

- Innovation Impact. While aiming to ensure safety and ethical standards, the rigorous requirements could slow down the innovation cycle for developers targeting the EU market.

For AI Users

Enterprises that use AI tools built by others are affected as well. The main consequences are:

- Vendor Assessment. Companies using AI tools must carefully assess their vendors to ensure their technology complies with EU regulations.

- Operational Changes. Enterprises may need to modify AI-powered processes to align with the act’s transparency and data governance requirements.

- Training and Development. Companies must invest in training their workforce to understand the new regulatory framework and to use AI systems effectively within it.

Companies outside the European Union that do business in Europe or have customers there may face substantial fines if they do not comply with the AI regulations: for the most serious violations, up to EUR 35 million or 7% of worldwide annual turnover, whichever is higher. This mirrors the GDPR, the European privacy law, which also reaches non-EU companies and ties its fines to turnover.
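To make the scale of these penalties concrete, the "whichever is higher" rule can be worked through in a few lines of Python. This is a rough sketch under stated assumptions: the function and dictionary names are hypothetical, the three ceilings reflect the Act's penalty provisions as published and should be checked against the official text, and the separate rule for small and medium-sized enterprises is deliberately left out.

```python
# Fine ceilings as (fixed cap in EUR, percent of worldwide annual turnover).
# Assumed from the Act's penalty provisions; verify against the official text.
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def fine_ceiling_eur(violation: str, worldwide_turnover_eur: int) -> int:
    """Return the maximum fine: the higher of the fixed cap and the turnover share."""
    fixed_cap, percent = FINE_TIERS[violation]
    # Integer arithmetic avoids floating-point rounding on large amounts.
    turnover_based = worldwide_turnover_eur * percent // 100
    return max(fixed_cap, turnover_based)


if __name__ == "__main__":
    # A company with EUR 2 billion turnover: 7% exceeds the fixed cap.
    print(fine_ceiling_eur("prohibited_practice", 2_000_000_000))  # 140000000
    # A company with EUR 100 million turnover: the fixed cap applies.
    print(fine_ceiling_eur("prohibited_practice", 100_000_000))    # 35000000
```

For large enterprises the turnover-based figure dominates, which is why the exposure grows with company size rather than stopping at the fixed cap.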

Strategic Adjustments and Opportunities

Adapting to the EU AI Act also opens several opportunities for businesses:

- Early Compliance. Enterprises that adapt early to the EU AI Act can position themselves as market leaders in responsible AI practices, potentially gaining a competitive advantage.

- Innovation in Compliance Solutions. There is a significant opportunity for developing innovative solutions that help other companies achieve compliance efficiently and cost-effectively.

- Partnership and Collaboration. Building alliances with European tech firms can facilitate smoother adaptation to the regulatory requirements, benefiting from their experience and established compliance mechanisms.

Future Outlook: The Horizon of AI Regulation

Looking toward the future, the EU AI Act is likely the beginning of global regulatory efforts to oversee AI technologies. Like the General Data Protection Regulation (GDPR), the AI Act could become a template for future AI laws worldwide. This presents a dual challenge and opportunity for global enterprises:

- Global Harmonization. Enterprises must prepare for a future where similar regulations may be implemented in other regions, necessitating a harmonized approach to AI governance.

- Leadership in Ethical AI. Companies that embrace these regulations and lead in ethical AI practices will likely excel, building trust with consumers and regulators.

Conclusions

While the EU AI Act presents new challenges, it also offers a framework for safer and more responsible AI development and use, one that may shape practice well beyond Europe. Enterprises that proactively adapt to these regulations will not only comply with the law but also lead the way in the ethical application of artificial intelligence. The path forward involves embracing transparency, responsibility, and a commitment to ethical standards. Together, these commitments can pave the way for a future where AI contributes positively to society under robust and thoughtful regulation.